This post was sponsored by Books Forward and may also include affiliate links which means we make a small commission on any sales. All opinions are my own. Partnerships like these help us to pay our staff and to keep feminist media independent!

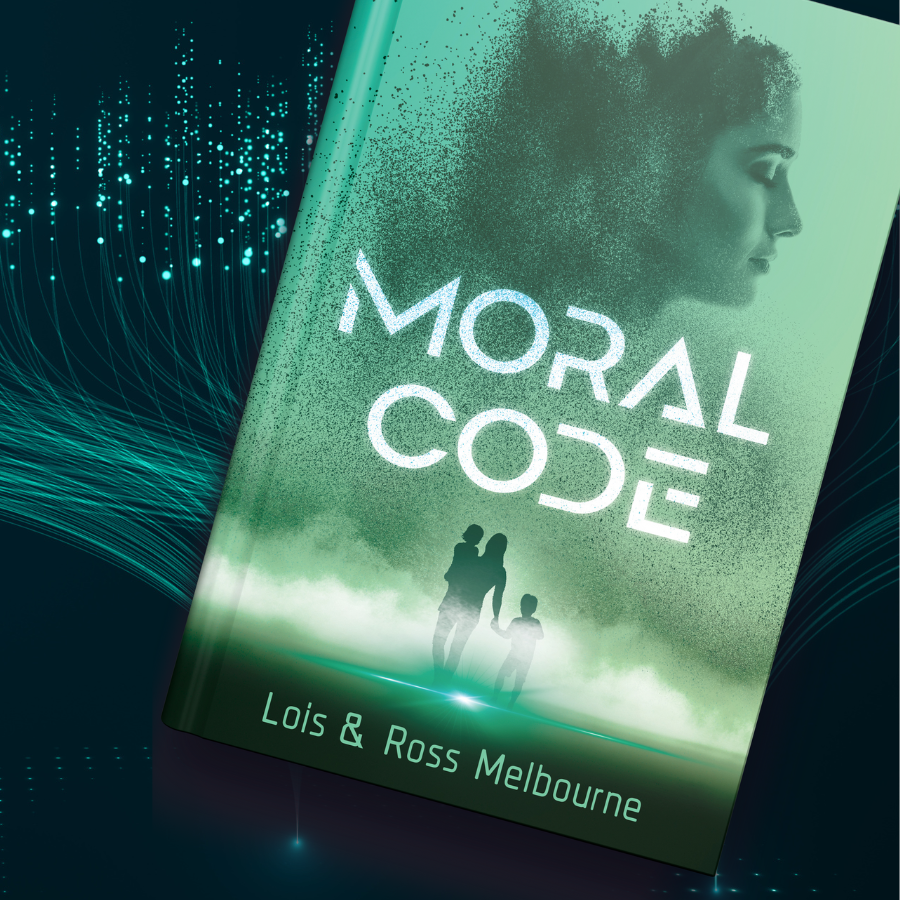

Moral Code is an ambitious science fiction novel that contends with the question of how to make the most of artificial intelligence (AI) while ensuring it does not forgo ethics and morality in its actions and decision-making. There’s no doubt that we’re living in a time when AI seems to be everywhere, making this a particularly timely read.

Setting aside my growing fear of AI for a bit, I interviewed the authors of Moral Code, Lois and Ross Melbourne, to talk about the book, technology, and the causes they’re supporting through the proceeds of this book.

SM: What inspired you to write Moral Code?

Lois: We were discussing artificial intelligence and the dilemmas faced in making their decisions ethical. It turned into a discussion about how cool a book and movie would be about the most ethical AI being driven to protect kids. The chat launched into the better part of a Sunday spent brainstorming book concepts.

SM: How much did you draw from your own background in technology when writing this, and how close to reality (or future reality) is the tech featured in the book?

Lois: Ross is the technologist and futurist. He gave me tech lessons, which built upon my existing understanding and curiosity around AI and other technologies. I’d then dive deeper into research to help me create the imagery and usefulness. An example of how close to reality we were hitting became obvious when we’d dream up future uses or features and then MIT or some other research facility would announce something similar. This happened more than once and required rewrites and the tightening of the story’s timeline.

Ross: I gave her too much technology. We got feedback on early drafts to balance the tech with the storytelling. Lois figured out how to do that and make the story accessible to non-techies.

SM: This book dives into the ethics and morality of artificial intelligence, which is something we’re contending with right now in real time, and yet there is so much potential for AI to have a positive social impact. What are your thoughts on how we can ensure AI is making a positive impact without causing more harm?

Lois: I believe ethics and the impact a technology can have on its users and society need to be at the forefront of all designs and boardroom discussions. Technologists and companies need to be very mindful and transparent about the sources of data they use to train their AIs, as well as what they are doing to put guardrails around the training to mitigate bias and harmful output.

In some methods of training AIs, you can equate it to training a dog. You may need both positive and negative reinforcement to get the right results. If you never tell a dog “no” you will have an unruly dog.

SM: Keira has a strong moral compass and it guides her decision-making along the way. This adds another layer to her character, as she’s also a woman in a male-dominated field. Can you share how you designed her character?

Lois: I know a lot of strong women. They have been shaped by every bump in their journey, the people they surround themselves with and their successes. I considered Keira’s backstory and her experiences during the story’s timeline to shape her drive and her motivations. I purposely didn’t make her a mother because I wanted her to be able to focus on approaches that would impact all kids. I didn’t want to shift the focus to a home front, which was easier without her having children.

SM: It’s so cool that the proceeds generated through the sales of Moral Code are donated to help fight child abuse and the trafficking of kids. Why was this important to you?

Lois and Ross: 1.2 million kids are affected by trafficking for forced labor globally at any moment. Around 46 kids are sold into trafficking daily in the U.S. alone. It’s deplorable. A lot of people think it’s only a problem outside the U.S., but it’s going on right here too. Child abuse outside of trafficking is also difficult to track statistically. Abuse is kept a secret far too often. These kids suffer greatly, and society suffers too. Abuse often creates long-lasting mental health issues and a cycle of abuse for future generations. We donate the proceeds to Thorn and to Prevent Child Abuse America, to do a small part in helping these amazing organizations fight against these terrors.

SM: What do you want readers to take away from Moral Code?

Lois: There are multiple layers to Moral Code. I love normalizing strong women in STEM through my characters and their efforts to make a difference, especially for kids. It would be great if we inspire readers to consider how AI can be a powerful, helpful tool, not a doomsday tech. Maybe they will support those efforts or invent them. It’s common to hear how Star Trek’s tricorder inspired the creation of the flip phone. Maybe one day Moral Code will be known for inspiring the creation of the most ethical AI. I may have grandiose aspirations, but you asked.

Thanks again to Lois and Ross for their time and for sponsoring this post. Visit moralcodethebook.com to learn more and to download a free chapter.
